Shortly after plunging into this exploration, I stumbled upon the work of Kevin Slavin, once again through CBC's Spark (179). It was a beautiful marriage. Slavin's discussion of 'algorithms as nature' only reemphasized my second motivating impulse: if something as stereotypically 'artificial' as an algorithm is in fact natural or a part of Nature--yes, the reified capital 'N,' Nature--then perhaps humanity had best reconceptualize the machine-human-nature relationship. That is, humanity's view of both 'Nature' and 'Machine' as diametrically opposed 'Others' is a serious hindrance to its self-conceptualization. It isolates the system from both its past (Nature) and future (Machine or hybrid) by making itself the indivisible divider: the Individual. Thus, for the very idea of 'algorithms as nature' to even make sense, one must decentralize their current conception qua relation to the 'world.'

In his TED talk, Slavin pushes this idea even further. Not only are algorithms beginning to dominate on the internet, in economics, and even in culture, but they operate in these domains in ways that humanity's current modes of perception cannot grasp. When changes occur in the system, we cannot see them, predict them, or understand them. To use my own jargon: as the machinic level of organization gains ever-increasing precedence, we urgently need an integrative approach, a language and framework, in which to operate in this domain.

With this problem in hand, I reapproached the discussion of the weavr's feature-set. If the weavr is a tool that can help our growing understanding of the machine-human-nature triad in the context of social mechanisms, it must in some way answer a particular question related to this problem. It must bring an implicit element of this relationship into an explicit manifestation. It must help us 'see.' However, I do not believe the weavr is so simplistic that it can only help us answer one question. Rather, it seems to have the flexibility to answer many depending on the context we impart to it. Thus, this context and its particular implementation is a key point. For the Encodingist, it is the point at which we breathe 'life' into the organism. For the Interactivist, it is the framework in which an 'answer' will emerge and grow. And, in its current manifestation, it is more art (implicit) than science (explicit).

To anticipate a future critique: I have no problem with art and some might argue that art has explicit manifestations. It does. My point is just that the ambiguity of a gestalt does not offer the necessary formality needed to develop a framework for the language of the sapiens machina.

Perhaps, on the other hand, the distinction between science and art is a tad crude. Instead, it may be more accurate to say that the weavr needs more structure to better ground its implicit qualities. The goal, after all, when working at this level of abstraction and cross-domain integration (i.e., machine-human-nature) is to utilize the methods of both: to crystallize just enough of the raw potentialities that the underlying emergent qualities remain visible (i.e., do not stagnate in crystallization) but neither do they vanish in the vivid halo of a hallucination. The weavr is unique because it already sits at this intersection. This brings me to my second key point: the 'answer.'

Currently, we can gather a primitive answer by asking the growing weavr various questions, seeing what new emotions and their respective associations it has picked up, examining what it has stumbled upon on the web through its posts, etc. However, most of this is very distant from the original question as it was framed in the context we uploaded into the weavr's system. Words may repeat or appear. Certain vague patterns suggest themselves, but the creature is rather opaque. I lack a way to 'see' into the world of the weavr as it has changed through its interaction with its environment and self. Without giving it a series of questionnaires before and after certain time frames as I might a human, I am left in the dark. Thus, perhaps the construction of the weavr is overemphasizing the 'human' qualities of the system in order to demonstrate their ontology. With this emphasis, it is easy to forget the beauty of the underlying formality of any digital structure. The architecture of the code and the digitized information offers the perfect entry point for further formalization qua crystallization of emergent features. In less rigorously formalized systems like humans, this is much harder to accomplish. Thus, we must not forget the 'machine' part of the machine-human-nature interrelation.

On the other hand, it is possible to argue that the questions the weavr answers are not a part of the local system per se, but emerge through the way in which humanity interacts and responds to it a la Jon Ronson. I agree with this, perhaps, as a third point. However, even if this is the case, then there still needs to be a method in which we can examine these features. Perhaps a prosthetic that maps global patterns of behavior across different feature sets (e.g., other prosthetics). Perhaps one that allows a weavr to look through various search engines for reference to itself and comment on it. The more information that is mapped, the greater the number of relations, the better as far as I am concerned.

I choose to shirk the discussion of evaluation or quality because of the necessary context that must be presupposed. If we take information generically in whatever limited way we can, then sheer volume is beneficial as it allows for a multiplicity of contexts. It places less restriction on the questions that might be asked. In this case, more is better. But, this 'more' still requires a method of interpretation: a way to 'see.'

Data visualization is an area of study that fits perfectly into the framework in question. A TED talk by David McCandless offers an excellent introduction and illustration of the field. In a nutshell, McCandless demonstrates that graphical metaphors offer an incredible way to condense massive amounts of data into comprehensible packages. Visualization offers a clever method to make implicit phenomena blatantly explicit. It allows our natural processes to integrate our visually dominant, human-centric world with one of probabilities, statistics, and mass-scale data patterns: the machinic level of organization. Thus, data visualization or perhaps data design is the perfect language for the future sapiens machina.

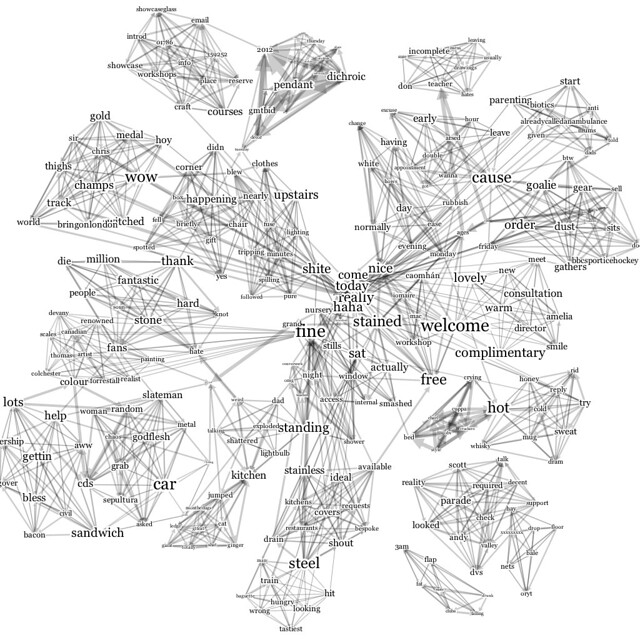

One design framework or technique I commonly use to conceptualize abstract spaces, especially if they are highly interconnected or system-based, is an intersection of graph and set theory. I 'see' nodes and edges or bubbles and lines. Yet, I also see more than that. I 'see' sets of nodes and edges denoted by any type of pattern in the graph (e.g., a node, a cluster of nodes, a cluster of clusters of nodes, an edge, potential edges, external data sets/properties, etc.).
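To make the "graph plus sets" picture a little more concrete, here is a minimal Python sketch. It is only an illustration under my own assumptions: the class name `ConceptGraph`, its methods, and the example words are hypothetical and are not part of the weavr or of any existing library. The graph holds nodes and undirected edges, and a named set (a "cluster") can be laid over any subset of nodes.

```python
from dataclasses import dataclass, field


@dataclass
class ConceptGraph:
    """Hypothetical container: a graph with named sets of nodes laid over it."""
    nodes: set = field(default_factory=set)
    edges: set = field(default_factory=set)        # each edge is a frozenset of two nodes
    clusters: dict = field(default_factory=dict)   # cluster name -> set of member nodes

    def connect(self, a, b):
        """Add an undirected edge between two bubbles, creating them if needed."""
        self.nodes.update((a, b))
        self.edges.add(frozenset((a, b)))

    def group(self, name, members):
        """Name a set of nodes so it can be treated as a single pattern."""
        self.nodes.update(members)
        self.clusters[name] = set(members)

    def edges_within(self, name):
        """Return the edges whose endpoints both lie inside the named cluster."""
        members = self.clusters[name]
        return {e for e in self.edges if e <= members}


g = ConceptGraph()
g.connect("machine", "human")
g.connect("human", "nature")
g.connect("nature", "machine")
g.connect("algorithm", "machine")
g.group("triad", {"machine", "human", "nature"})

# The cluster picks out the three edges among its members and ignores
# the edge that leaves the cluster.
print(len(g.edges_within("triad")))
```

Keeping the clusters as plain sets means the usual set operations (union, intersection, difference) apply directly to patterns in the graph, which is the sense in which the two theories intersect here.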

One way in which I could see an implementation of all of the ideas discussed here is through this framework. That is, rather than boxes or categories into which the user drops various words and still further categories that draw the relations between these words, give the user an elementary graph. Let them drop their words into bubbles and connect them via lines to other bubbles. When you want to add sophistication, allow the bubbles to represent clusters of bubbles (i.e., filter or restrict the current domain of inquiry). I like to think of this as collapsing dimensions in phase space. Then, as a second feature, allow the user to check back on this graph in future states and 'see' what new connections have developed or new nodes have appeared. Searching for self-references is but another way to further this map. And, allowing the maps to feed into one another (i.e., accessing another weavr's map through a node) would allow for even larger gradations of abstraction as you move up or down the ladder of organization (i.e., micro or macro patterns). In some way, I can see this as a development of a social semantic web.
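Two of the features above, collapsing a cluster of bubbles into a single bubble and checking back on the graph to 'see' what has appeared, can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not a description of how the weavr works: the function names (`edge`, `collapse`, `new_since`) and the sample words are hypothetical, edges are undirected, and nodes are inferred from the edges, so an unconnected bubble is not tracked.

```python
def edge(a, b):
    """Normalize an undirected edge so (a, b) and (b, a) compare equal."""
    return tuple(sorted((a, b)))


def nodes_of(edges):
    """Collect every node that appears in at least one edge."""
    return {n for e in edges for n in e}


def collapse(edges, clusters):
    """Replace each node with its cluster name and drop edges internal to a cluster."""
    owner = {n: name for name, members in clusters.items() for n in members}
    collapsed = set()
    for a, b in edges:
        ca, cb = owner.get(a, a), owner.get(b, b)
        if ca != cb:
            collapsed.add(edge(ca, cb))
    return collapsed


def new_since(before, after):
    """Report the nodes and edges present in `after` but absent from `before`."""
    return {
        "nodes": nodes_of(after) - nodes_of(before),
        "edges": after - before,
    }


# An earlier snapshot of the map and a later one.
before = {edge("weavr", "emotion"), edge("weavr", "web")}
after = before | {edge("emotion", "joy"), edge("web", "joy")}

changes = new_since(before, after)
print(changes["nodes"])   # the bubble that appeared between snapshots
print(changes["edges"])   # the connections that developed

# Viewing the later map at a coarser grain: two bubbles become one.
print(collapse(after, {"inner": {"emotion", "joy"}}))
```

Feeding one map into another would follow the same pattern: a node in one graph stands in for an entire edge set elsewhere, and `collapse` is the step that moves between those levels.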

In case this idea is of interest, there are a variety of Python graph APIs that might be useful. This site has a link to a bunch of them. Not all have visualization features.

Pictures courtesy of:

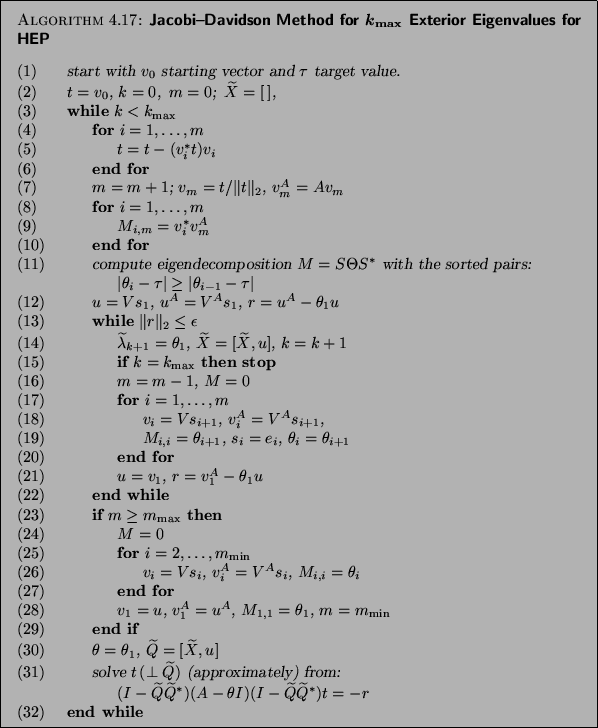

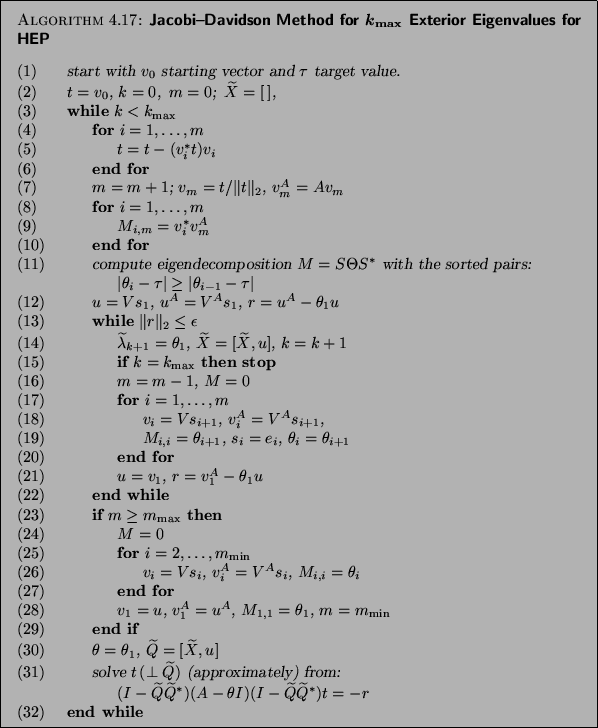

http://web.eecs.utk.edu/~dongarra/etemplates/node144.html

http://www.international.ucla.edu/calendar/showevent.asp?eventid=6591

http://2010.sonicacts.com/programme/session9-gardeners-of-the-future/

http://www.orthocuban.com/2009/05/on-venn-diagrams-and-commonalities/

http://www.informationisbeautiful.net/visualizations/
